
Part 2: From Proof of Concept to Production — The AI Delivery Playbook

Escaping “Pilot Purgatory” requires more than better models; it requires better decisions. When leaders focus on trust, readiness, and business fit—not just technical feasibility—AI moves forward. The objective is a clean passage into production, where employees and customers actually benefit.

By shifting focus from "can we build this?" to "should we build this?", leaders can finally unlock their data’s true value. The goal isn't just to launch a pilot; it's to land the plane safely so the passengers, your employees and customers, reach their destination.

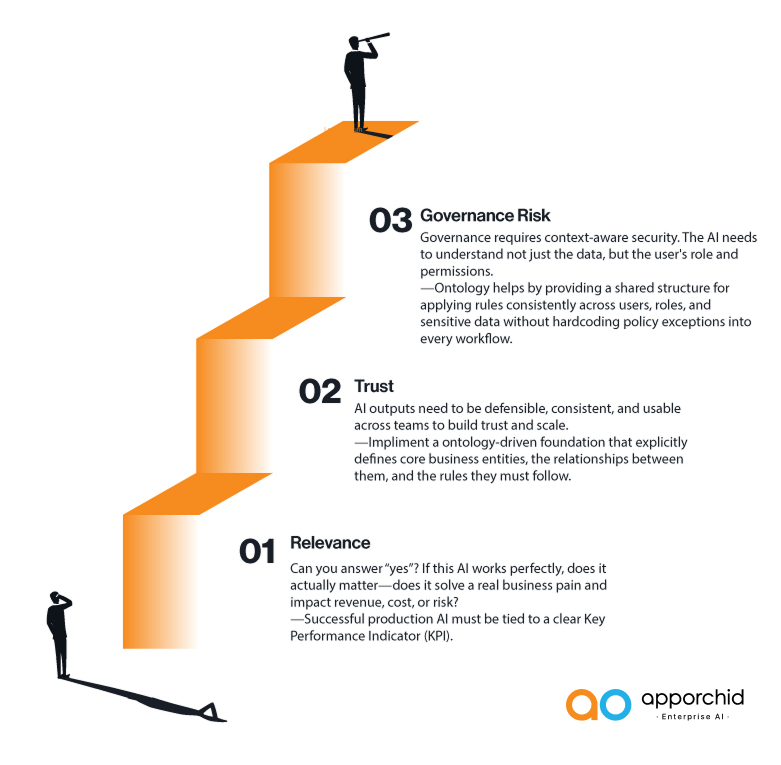

So how do enterprise AI projects cross the chasm from experiment to mission-critical application? To move from a fragile experiment to a robust production system, AI initiatives must pass through three critical "gates" that separate successful deployments from eternal pilots.

Relevance: Does the Use Case Move the Needle?

The first gate is all about business impact. Too often, AI projects are solutions looking for a problem. Organizations get caught up in the hype, building chatbots or predictive models because they can, not because they should.

Relevance asks the hard questions: If this AI works perfectly, does it truly matter? Does it solve a burning pain point for the business? Does it directly impact revenue, cost, or risk?

If the answer is vague, the project will likely lose momentum as the initial excitement fades. Successful production AI must be tied to a clear Key Performance Indicator (KPI). It shouldn't just be "nice to have"; it needs to be an essential tool that users rely on to do their jobs better.

Trust: Do Leaders and Users Trust the Output?

Trust is the currency of adoption, and trust can’t be established if the results aren’t accurate. You can build the most sophisticated neural network in the world, but if employees and executives alike don't trust its recommendations, they will ignore them.

In "Pilot Purgatory," users might see AI as a black box—inputs go in, magic happens, and an output comes out. Without explainability, skepticism grows. If a sales forecasting tool predicts a downturn, the Sales Director needs to know why. Is it based on historical seasonality? A competitor's price drop? Or a data error?

Building trust requires transparency. In 2026, the winning approach moves beyond “labels” toward an ontology-driven foundation that explicitly defines core business entities, the relationships between them, and the rules they must follow. This is what makes AI outputs defensible, consistent, and usable across teams. When users understand the why, they are more likely to trust the what.

But many enterprises never align on meaning. Different teams carry different definitions of the same concepts, such as “revenue,” “active customer,” “top performer,” and “margin,” and the AI inherits that ambiguity. When meaning is inconsistent, trust in the output collapses.
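To make the ambiguity problem concrete, here is a minimal Python sketch of how conflicting definitions might be surfaced. The team names, terms, and definition wording are all invented for illustration, and `find_conflicts` is a hypothetical helper, not part of any product.

```python
from collections import defaultdict

# Hypothetical definitions collected from three teams (illustrative wording only).
team_definitions = [
    ("sales", "active customer", "purchased in the last 90 days"),
    ("support", "active customer", "opened a ticket in the last 12 months"),
    ("finance", "active customer", "purchased in the last 90 days"),
    ("sales", "margin", "revenue minus cost of goods sold"),
    ("finance", "margin", "revenue minus cost of goods sold"),
]


def find_conflicts(definitions):
    """Return terms with more than one distinct definition, and the teams behind each."""
    by_term = defaultdict(lambda: defaultdict(list))
    for team, term, meaning in definitions:
        by_term[term][meaning].append(team)
    # Keep only the terms on which teams disagree.
    return {
        term: dict(meanings)
        for term, meanings in by_term.items()
        if len(meanings) > 1
    }


conflicts = find_conflicts(team_definitions)
for term, meanings in conflicts.items():
    print(term)
    for meaning, teams in meanings.items():
        print(f"  {', '.join(teams)}: {meaning}")
```

In this made-up data, "margin" is consistent across teams while "active customer" is not, which is exactly the kind of gap an AI would otherwise inherit silently.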

Governance Risk: The Access Dilemma

The final gate is governance. In the sandbox phase, security and access controls are often relaxed to speed development. But in production, giving an AI agent broad access to enterprise data is a massive risk.

What happens if an employee asks, "What is the CEO's salary?" or requests, "List all confidential clients"? If the AI has unrestricted access to the database, it will answer truthfully and disastrously.

Governance requires context-aware security. The AI needs to understand not just the data, but the user's role and permissions. For example, AI must understand that a manager can see salary bands, but an associate cannot. Failing to solve this governance puzzle is a primary reason legal and compliance teams kill projects before they go live.

Ontology helps by providing a shared structure for applying rules consistently across users, roles, and sensitive data without hardcoding policy exceptions into every workflow.
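As a rough illustration of context-aware security, the Python sketch below filters a record by the requesting user's role before anything reaches the AI. The policy table, the `hr_admin` role, the field names, and the sample record are assumptions made up for this example; a real deployment would draw these rules from its own governance model.

```python
# Hypothetical policy: which roles may read which fields.
FIELD_POLICY = {
    "name": {"associate", "manager", "hr_admin"},
    "salary_band": {"manager", "hr_admin"},
    "salary": {"hr_admin"},
}


def visible_fields(record, role):
    """Return only the fields this role may read; fields with no policy are denied."""
    return {
        field: value
        for field, value in record.items()
        if role in FIELD_POLICY.get(field, set())
    }


# Invented sample record for illustration.
employee = {"name": "A. Example", "salary_band": "B3", "salary": 100000}

manager_view = visible_fields(employee, "manager")
associate_view = visible_fields(employee, "associate")
print(manager_view)
print(associate_view)
```

Because the rule lives in one shared policy rather than in each workflow, the manager sees the salary band and the associate does not, without any per-question exceptions.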

The Role of the Semantic Layer

So, how do we solve the trust, accuracy, and relevance problems? The answer often starts with something most pilots skip: ontology discovery.

App Orchid’s ontology discovery identifies, extracts, and formalizes the concepts, entities, relationships, and rules that already exist inside an organization’s data and workflows, often before anyone has documented them explicitly. It gives meaning to every data point.

In short, ontology discovery turns implicit business knowledge into explicit, shared semantic knowledge that AI can use to understand what it is looking for and how it relates to the business.

Think of your raw data as a library where every book is dumped on the floor in a chaotic, random pile. Some books are invaluable for your project; others are outdated, redundant, or irrelevant. The challenge isn’t just collecting data—it’s organizing it so you can quickly locate the exact sources that illuminate your problem, confirm assumptions, and drive decisions.

Ontology discovery transforms that unruly library into an aligned, navigable map of your business. The payoff is a data landscape that is a coherent whole rather than a pile of independent datasets. With ontology discovery, your AI stops guessing and starts reasoning, with clarity, accountability, and scalable impact.

Most tools require teams to manually define concepts like “customer,” then map every system to that definition. That’s slow, brittle, and often becomes a permanent service effort. App Orchid approaches it differently. We can automatically construct an ontology by analyzing a company’s existing data stack, then validating it with humans.

Validation has two layers:

- A technical review to ensure mappings and data connections are correct.

- A business/domain review to confirm definitions match how the business actually operates (the same people who define KPIs and operating metrics).

This is what turns a pilot into a production system. Meaning is explicit, shared, and verified, not buried in tribal knowledge or locked inside model prompts.
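The two validation layers can be pictured with a small Python sketch. It assumes a hypothetical `OntologyEntry` record with one approval flag per review; the class, its fields, and the sample entries are illustrative and do not describe App Orchid's actual data model.

```python
from dataclasses import dataclass


@dataclass
class OntologyEntry:
    """A hypothetical ontology term with one approval flag per review layer."""
    term: str
    definition: str
    source_mappings: list
    technical_approved: bool = False  # mappings and data connections checked
    business_approved: bool = False   # definition matches how the business operates

    def is_verified(self):
        # An entry counts as verified only when both reviews have signed off.
        return self.technical_approved and self.business_approved


def unverified_terms(entries):
    """List the terms still waiting on at least one review."""
    return [entry.term for entry in entries if not entry.is_verified()]


# Invented entries for illustration.
entries = [
    OntologyEntry("customer", "a party with at least one signed contract",
                  ["crm.accounts", "billing.payers"],
                  technical_approved=True, business_approved=True),
    OntologyEntry("margin", "revenue minus cost of goods sold",
                  ["finance.ledger"],
                  technical_approved=True),
]

print(unverified_terms(entries))
```

Treating both approvals as required keeps a technically correct mapping from reaching production while its business meaning is still unconfirmed.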

An ontology-driven foundation bridges the gap between raw technical data and business logic. This drastically improves accuracy, reduces hallucinations, and ultimately builds the trust and control required to escape pilot purgatory.

To succeed, leaders must be explicit about which decisions require tight governance and where they must retain architectural freedom. The challenge for the modern enterprise is to capture the benefits of this structured decision-making without surrendering the ability to evolve independently over time.

Your Checklist for Moving to Production

If you are currently staring down a stalled POC or planning your next initiative, this executive checklist can help ensure that you build for production, not just for a demo.

- Define clear value: Have you identified a specific business metric this AI will improve? Is the use case critical to daily operations?

- Establish a semantic foundation: Are you feeding the AI raw, messy data, or have you built a semantic layer to provide context and definitions?

- Audit for governance: Have you defined role-based access controls? Does the AI respect user permissions inherently?

- Measure trust: Is there a mechanism for users to give feedback on AI outputs? Is the AI "explainable" enough for a non-technical user?

- Plan for maintenance: Who owns the model once it is live? Do you have a plan for retraining as data evolves?

- Confirm shared meaning: Do you have an ontology that defines your core business entities and relationships? Has it been validated by both technical and business reviewers?

If you’re ready to move beyond pilots, App Orchid can ensure that your data delivers lasting, meaningful outcomes for everyone involved.

